Every week, I speak with executives who tell me the same thing with the same mixture of pride and quiet unease: “We’re investing heavily in AI.” They can list the pilots. They can name the vendors. They can point to the task force, the steering committee, the innovation lab with the glass walls and the whiteboards covered in Post-it notes.

What they cannot always do – and this is the part that keeps them awake – is tell me how it all connects, how the pieces add up, and, most importantly, how that translates into organisational value that can be seen in the bottom line: what the organisation is optimising for, and whether the AI investments are serving that goal or simply orbiting it at a respectful, inconclusive distance.

There is a difference between having AI initiatives and having an AI strategy. That difference, in my experience, is worth tens of millions of dollars and several years of competitive position. And the window to close that gap is narrowing. The organisations getting this right now are not waiting for perfect conditions – they moved at least three to four years ago (many much longer), and the distance between them and the field is growing every quarter.

The reason it is hard to see from the inside is not a failure of intelligence. It is a failure of diagnosis. And you cannot fix what you have not correctly identified.

What the confident diagnosis usually looks like

In most engagements, the leadership team begins with a clear working hypothesis about what is constraining progress.

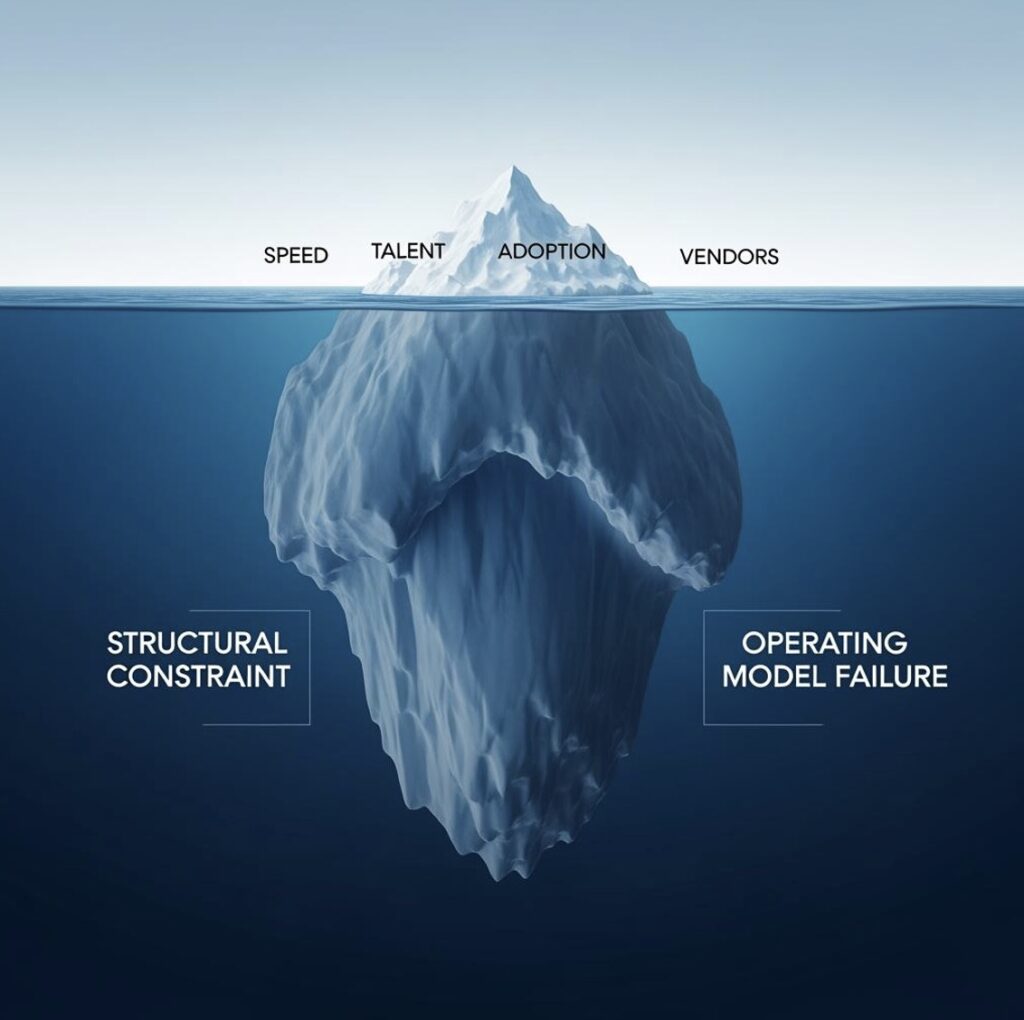

Most often, that hypothesis falls into one of four categories:

1. The speed hypothesis:

“We are not moving quickly enough. The technology is evolving faster than the organisation can absorb it.”

This typically leads to investment in agile delivery models, faster procurement processes, and innovation structures designed to accelerate execution. These are not misguided investments. More often, however, they address a visible constraint rather than the primary one.

2. The talent hypothesis:

“We do not yet have the right people. We need more data scientists, more machine learning engineers, and more leaders who can bridge scientific, technical, and commercial priorities.”

This is often a valid observation. But it is less often the central reason value is not being realised.

3. The adoption hypothesis:

“Our people are not using the tools. This is a change management issue involving culture, training, and communication.”

This is perhaps the most persuasive diagnosis because adoption challenges are real in most organisations. The mistake is in treating low adoption as the root cause rather than as an indicator of a deeper structural issue.

4. The vendor hypothesis:

“We selected the wrong partners. The tools are not fit for purpose in our environment.”

This is occasionally true. More often, however, it reflects an understandable tendency to attribute the problem externally when the more significant constraint sits within the organisation’s own operating model.

These hypotheses feel credible because they are usually directionally correct. Low adoption is real. Execution may indeed be too slow. Talent may be stretched. Vendor performance may be uneven. But in many cases these are downstream effects of a more fundamental structural constraint.

The distinction matters. Treating effects as causes leads organisations to optimise around symptoms rather than resolve the conditions producing them. It is the difference between managing repeated blockage and correcting the fault in the system itself.

Why intelligent teams misdiagnose their own AI constraints

Structural problems rarely present themselves clearly. They surface through secondary effects that can easily be mistaken for the primary issue.

When an AI initiative struggles to move from pilot to production, it can appear to be an adoption issue, a change management challenge, or a limitation in the technology itself. In one sense, all of those interpretations are reasonable: each is influenced by the underlying structural constraint. The symptom is genuine. The diagnosis it points to is often incomplete.

A second dynamic makes misdiagnosis more likely. The people closest to the programme are often the most committed to the current explanation of the problem. Revising that diagnosis can require acknowledging that substantial time, capital, and leadership attention have been directed toward the wrong intervention.

At senior levels, that is not a trivial admission. It is, however, often the necessary starting point for meaningful progress.

The four structural conditions that actually constrain AI value – and how to recognise them in your own organisation

Across more than a decade of AI strategy engagements in life sciences (mainly pharma and biotech), spanning R&D operations, clinical, regulatory, medical affairs, market access, commercial, and integrated global enterprises, I have found that the failure to realise value from AI investment can usually be traced to one or more of four structural conditions.

They manifest differently from one organisation to another. Their underlying mechanics, however, are remarkably consistent.

1. The value prioritisation gap

A structural condition often precedes and compounds the other three, and because it is rarely framed as a strategic failure it is almost never treated as one: the absence of a rigorously prioritised, financially modelled view of where AI would most move the needle for the business.

In most organisations, pilots are selected because they address a genuine and visible pain point, e.g. a bottleneck in medical information response times, friction in field force planning, a manual process in regulatory intelligence. The work typically succeeds on its own terms. The pilot solves what it was commissioned to solve. The difficulty is that “a real pain point” is not the same as “the pain point that materially changes the economics of the business.” In a budget-constrained environment, the two are often mutually exclusive.

Capital and executive attention expended on a genuine but second-order problem is capital and attention no longer available for the first-order opportunity – the one that would have shifted revenue, margin, cycle time, or competitive position at a scale the organisation could defend at board level.

Without a strategy that is grounded in full financial modelling of impact, and that ranks candidate use cases by their quantified value contribution rather than by their visibility, sponsor enthusiasm, or ease of execution, portfolios tend to optimise toward tractable problems rather than consequential ones. Effort is expended, pilots are delivered, and each initiative is defensible in isolation. The cumulative business impact, however, rarely comes close to justifying the aggregate investment.
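As a rough illustration of the ranking logic described above, the Python sketch below orders a small set of candidate use cases two ways: by how visible the pain point is, and by modelled net value. Every name, score, and figure in it is hypothetical and chosen only to show how the two orderings can diverge; a real model would rest on the organisation's own financial assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    visibility: int            # hypothetical 1-5 score: how visible the pain point is
    annual_value_musd: float   # hypothetical modelled annual value, USD millions
    annual_cost_musd: float    # hypothetical annual cost to build and run, USD millions

    @property
    def net_value_musd(self) -> float:
        return self.annual_value_musd - self.annual_cost_musd


# Illustrative portfolio only; all figures are invented for this example.
candidates = [
    UseCase("Medical information response triage", 5, 2.0, 1.0),
    UseCase("Field force planning assistant", 4, 3.0, 1.5),
    UseCase("Regulatory intelligence automation", 3, 1.5, 0.5),
    UseCase("Patient identification model", 1, 25.0, 4.0),
]

by_visibility = sorted(candidates, key=lambda u: u.visibility, reverse=True)
by_net_value = sorted(candidates, key=lambda u: u.net_value_musd, reverse=True)


def funded_net_value(ranked, slots):
    """Total net value captured if only the first `slots` use cases are funded."""
    return sum(u.net_value_musd for u in ranked[:slots])


if __name__ == "__main__":
    slots = 2  # assumed budget: room for two initiatives
    print("Ranked by visibility:", funded_net_value(by_visibility, slots))
    print("Ranked by net value: ", funded_net_value(by_net_value, slots))
```

With these invented figures and a budget for two initiatives, the visibility-led portfolio captures 2.5 in net value against 22.5 for the value-led one. The point is not the numbers but the mechanism: each funded pilot is defensible on its own, yet the ranking criterion decides whether the consequential opportunity is ever selected.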

The diagnostic pattern is recognisable from the outside: a steady cadence of successful pilots, strong internal narratives of progress, and yet no clear line of sight between the portfolio and the two or three strategic outcomes the business is actually trying to achieve. In these cases, the issue is rarely that the wrong pilots were built. It is that the right ones were never chosen.

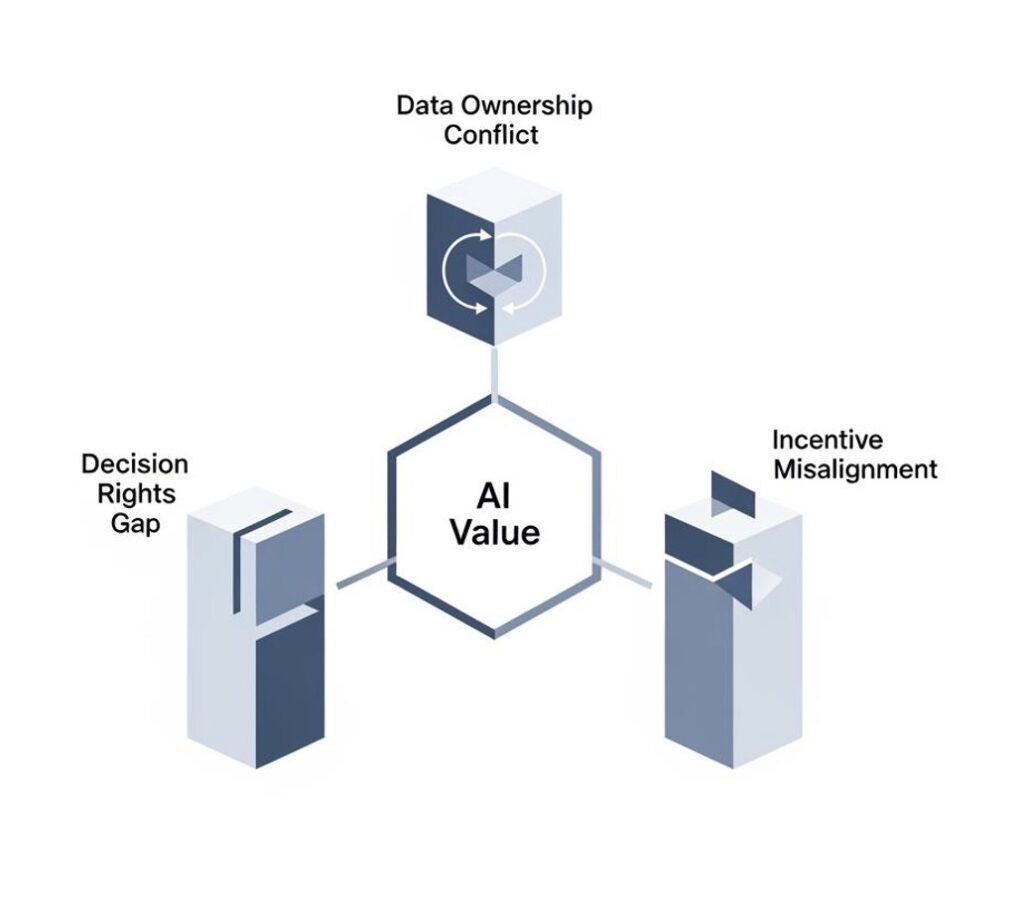

2. The decision rights gap

In many life sciences organisations, the authority to initiate an AI pilot is clearly defined. A business unit, digital function, or innovation team can usually sponsor a controlled experiment without difficulty. The authority to scale that pilot into an operational capability is far less clear.

This is rarely the result of negligence. More often, it is a predictable consequence of how AI programmes were originally established: as innovation initiatives, governed and funded outside the core operating model, with accountability structures designed for experimentation rather than enterprise deployment. The difficulty arises when organisational ambition evolves from “test and learn” to “transform the business,” but the governance model (and the organisational model) does not evolve with it.

The consequence is an invisible barrier between pilot and production. Business units assume scale-up is a central decision. Digital functions assume it requires business sponsorship, budget ownership, or both. Legal and compliance may hold an informal veto, exercised inconsistently through risk reviews that were never fully integrated into governance. Procurement may apply approval processes built before the organisation had a clear view of what AI procurement would require.

In these environments, no single function is obstructing progress. Each is acting within a legitimate remit. Yet the cumulative effect is highly predictable: promising pilots are not formally rejected; they are deferred. Over time, they accumulate in a pipeline that signals momentum while producing limited enterprise value.

The diagnostic pattern is usually clear once examined closely: a portfolio of pilots that are widely viewed as successful, strong vendor feedback, genuine stakeholder enthusiasm, and yet a production deployment rate that is materially lower than the pilot volume would suggest. When pilots work and still do not scale, the issue is rarely the pilots themselves. The issue is the transition.

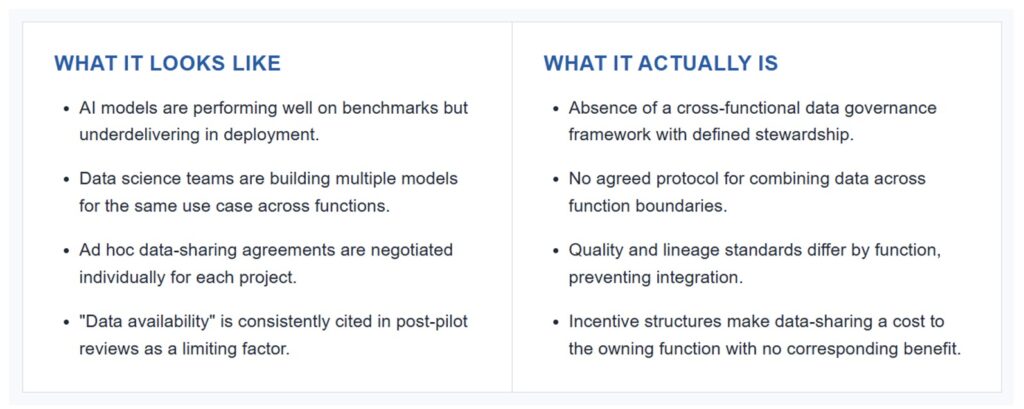

3. The data ownership conflict

Life sciences organisations hold proprietary data assets of exceptional strategic value: real-world evidence generated through operations, longitudinal field force data, market access histories, and patient journey insights developed through medical affairs and related functions.

In a well-structured AI environment, these assets become a durable source of competitive advantage. They are difficult to replicate, expensive to rebuild, and often impossible for a new entrant to acquire.

In practice, however, these assets are frequently only partially available to the teams responsible for building AI solutions. This is not usually because the data is absent, nor because system access is technically impossible. It is because multiple functions have legitimate governance interests in the same datasets, and no effective framework exists to reconcile those interests in a way that allows data to be combined, queried, and used at the pace AI development requires.

Commercial teams may govern field force data because it reflects operating performance and customer relationships. Medical affairs may govern interaction data because of HCP engagement and compliance considerations. Regulatory may control real-world evidence under frameworks designed for static analysis, not dynamic model development. Finance may govern historical performance data because of its implications for forecasting and revenue recognition.

Each of these positions is reasonable in isolation. Together, they often create an environment in which AI teams use the data that is easiest to access rather than the data that is most valuable.

That distinction matters. Models can be technically sophisticated and still strategically underpowered if they are trained on a partial, non-differentiating subset of the organisation’s information. The result is often an AI capability that appears advanced in form but is limited in practical advantage.

The commercial implications are especially significant in applications such as territory optimisation, next-best-action models, and patient identification. In these cases, the difference between a model trained on integrated proprietary data and one trained on external proxies is often the difference between true commercial leverage and an expensive approximation of what a capable analyst could achieve manually.

4. The incentive architecture misalignment

This is the structural condition most often underestimated, one of the least likely to be resolved through the interventions organisations typically prefer, and importantly, the one that sits furthest downstream. It cannot be addressed in isolation, because its resolution depends on the prior three being substantially in hand.

When AI adoption is low, the standard response is usually change management: communication campaigns, training, executive sponsorship, champion networks, and adoption dashboards. These interventions are not without value. They address a real and visible issue.

The problem arises when low adoption is treated primarily as a behavioural problem rather than as a rational response to the incentive environment.

Consider the position of a high-performing medical science liaison or a top-quartile commercial leader. They are being asked to alter established workflows, incorporate AI-generated recommendations, capture information in new ways to improve model performance, and rely on outputs they may not yet fully trust or interrogate. In return, the promised benefit is typically improved productivity or better decision support over time.

From their perspective, the calculation is straightforward. The cost of adoption is immediate, visible, and personal. The benefit is delayed, uncertain in scale, and often realised primarily at the organisational level rather than in the individual’s performance assessment.

That is not resistance to change. It is a rational response to the system in which they operate.

The appropriate intervention is therefore not limited to training or communication. It is the redesign of the performance environment so that AI-enabled productivity is recognised, measured, and aligned with individual incentives. That means coordination across HR, finance, business leadership, and operational management. It often requires changes to compensation structures, quota design, management reporting, and performance frameworks that have developed over many years and carry significant institutional weight.

There is, however, a sequencing point that is frequently missed. Incentive redesign cannot sensibly precede the resolution of the upstream conditions. An organisation cannot meaningfully restructure a field-based role’s performance framework around AI-enabled productivity until it has determined which use cases actually warrant that investment (the value prioritisation question), established that the chosen capabilities can in fact be scaled rather than left in pilot (the decision rights question), and secured the data access required to make those capabilities commercially differentiated rather than generic (the data ownership question). Incentive redesign undertaken before these are resolved tends to embed performance expectations around tools that may not scale, capabilities that may not survive contact with the data environment, or use cases whose economic contribution was never rigorously established in the first place. The organisation then finds itself re-engineering compensation and performance frameworks a second time, at considerable cost to both credibility and goodwill.

In practical terms, this makes the issue particularly difficult to resolve through the AI programme alone. It cannot be piloted away. It cannot usually be delegated to a digital team. It requires executive sponsorship, cross-functional authority, a willingness to change systems that sit well beyond the AI agenda itself — and the discipline to address it in the right sequence rather than the most visible one.

That is precisely why it is so often left unaddressed, or addressed prematurely. The leaders with the authority to resolve it may not recognise it as the real issue, because the visible symptom (i.e. low adoption) has already been attributed to something more familiar and easier to manage.

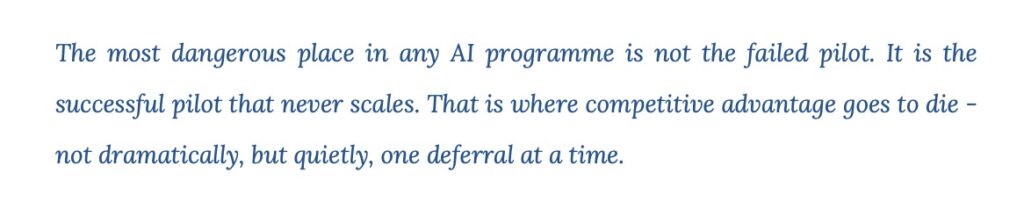

Why the sequence matters more than the solution

One of the most consistent lessons in AI transformation is that the right intervention, applied to the wrong problem or in the wrong sequence, produces results that are functionally indistinguishable from failure.

These conditions are not independent. They form a dependency chain: the value prioritisation question must be answered before decision rights can be designed around the pilots that matter; decision rights must be resolved before data access can be structured around the capabilities that will actually scale; and data and governance must be in place before incentive architecture can be meaningfully redesigned. A diagnosis that stops at one layer produces a strategy that fails at the next.
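One way to make the dependency chain concrete is to write it down as a small graph and let a topological sort recover the order in which the conditions have to be addressed. The sketch below uses Python's standard-library `graphlib`; the condition labels are shorthand for the four conditions in this article, and the helper `sequence_violations` is a hypothetical name introduced only for illustration.

```python
from graphlib import TopologicalSorter

# Each key maps to the set of conditions that must be resolved before it.
dependencies = {
    "value prioritisation": set(),
    "decision rights": {"value prioritisation"},
    "data ownership": {"decision rights"},
    # "data and governance must be in place" before incentives are redesigned
    "incentive architecture": {"decision rights", "data ownership"},
}

# A topological sort yields an order that respects every prerequisite.
resolution_order = list(TopologicalSorter(dependencies).static_order())


def sequence_violations(plan):
    """Return (condition, missing prerequisite) pairs for a proposed sequence."""
    resolved = set()
    problems = []
    for condition in plan:
        for prerequisite in sorted(dependencies[condition] - resolved):
            problems.append((condition, prerequisite))
        resolved.add(condition)
    return problems


if __name__ == "__main__":
    print(" -> ".join(resolution_order))
    # A plan that starts with incentive redesign, the most visible intervention.
    premature = ["incentive architecture", "value prioritisation",
                 "decision rights", "data ownership"]
    print(sequence_violations(premature))
```

Because the conditions form a single chain, only one ordering satisfies every prerequisite. A plan that leads with incentive redesign is flagged for skipping both data ownership and decision rights, which is the sequencing failure described here.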

This is not a theoretical point. It is a recurring pattern in underperforming AI programmes.

The clearest example sits at the portfolio level itself. When candidate use cases are selected before their value contribution has been quantified and compared (that is, when pilots are prioritised on the basis of visibility, sponsor energy, or ease of delivery rather than financially modelled impact), the organisation commits capital to solving problems that are real but not consequential. The pilots succeed on their own terms. The pain points are genuinely resolved. Yet the aggregate investment fails to shift the metrics the board is actually watching, because the opportunities that would have shifted them were never selected in the first place. No amount of downstream rigour (better governance, better data, better incentives) can recover value that was never on the table.

Governance redesign undertaken before the organisation has clearly identified the specific authority gaps it needs to resolve typically results in frameworks that are technically sound but operationally ineffective. They reflect generic best practice, yet fail to address the actual structural conditions shaping decision-making inside the organisation.

The same pattern appears in data governance. When data frameworks are designed before the priority commercial and operational use cases are clearly defined, they tend to optimise for control and compliance rather than for utility and value creation. They may be robust on paper, but they do not materially improve the organisation’s ability to deploy AI at scale.

A similar issue arises with incentive redesign. If changes are made without a precise understanding of which roles are encountering which adoption barriers, and why, the intervention may appear substantial while delivering little measurable impact. Considerable effort is expended, but movement in the underlying metric remains marginal.

For this reason, the diagnostic should not be seen as a procedural prerequisite — a box to be ticked before the “real work” begins. It is a causal prerequisite.

The quality of the strategic response is inherently constrained by the precision of the diagnosis — and that precision begins with an evidence-based, financially modelled answer to the most elementary question: of everything AI could do for this organisation, what would actually move the needle, and by how much?

An organisation cannot design a governance model that resolves its decision-rights ambiguity unless it has first mapped, with specificity, where that ambiguity sits; which stakeholders hold formal or informal veto power; how escalation currently works; and what conditions cause a promising pilot to stall rather than scale. Nor can it build a portfolio capable of generating defensible board-level returns unless it has first quantified — not assumed — which opportunities carry the greatest value, at what cost, and on what timeline.

Without that level of clarity, strategy risks becoming well-intentioned architecture built on an incomplete understanding. And in AI transformation, incomplete understanding is rarely neutral. It is expensive.

Start with diagnosis

If the quality of the strategic response is constrained by the precision of the diagnosis, then the diagnostic itself deserves more disciplined design than it typically receives. In many organisations, diagnostic work is folded into the opening weeks of a broader transformation engagement, conducted quickly, in parallel with solution design, and under commercial pressure to move to recommendations. The resulting assessment is rarely wrong, but it is frequently imprecise in exactly the places where precision matters most.

A more rigorous approach treats diagnosis as a distinct phase of work with its own scope, its own timeline, and its own deliverables, separated cleanly from the strategy work it is meant to inform. Its scope is intentionally focused. Not because the strategic challenge is simple, but because diagnostic accuracy is lost when organisations new to the approach move too quickly into broad transformation work before the source of constraint has been clearly established.

In practice, a diagnostic capable of answering the question ‘What is actually constraining AI value in this organisation?’ needs to examine the organisation across multiple capability dimensions and draw on multiple sources of evidence in combination: structured leadership interviews, document and portfolio review, and a variety of other measures. Each source corrects for the limitations of the others. For example, interviews surface the informal dynamics that documentation does not capture, while documentation reveals the patterns that individual stakeholders cannot see from their own vantage point.

Life sciences organisations operate under constraints that differ materially from those in sectors such as financial services or retail. Regulatory obligations, commercial models, scientific complexity, and cross-functional operating realities all shape how AI can be adopted and scaled. Generic AI transformation models often misread the true sources of constraint in this industry, producing diagnostic conclusions that are directionally plausible but operationally misleading.

What a rigorous diagnostic produces

The output of a well-constructed diagnostic phase is not a report in the conventional sense. It is a decision-grade articulation of the organisation’s actual constraints: one that leadership can act on with confidence, and one that would be difficult to construct through internal assessment alone.

Several things distinguish it from the diagnostic work most organisations have already attempted internally.

It is causal rather than descriptive. Most internal assessments catalogue what is happening (e.g. pilot counts, adoption rates, capability inventories). A rigorous diagnostic explains why those patterns exist, which structural conditions are producing them, and which are downstream effects of something else. Without that distinction, strategy optimises around symptoms.

It is quantified where quantification is defensible, and disciplined where it is not. The commercial consequences of leaving specific constraints unresolved are modelled in financial terms wherever the evidence supports it. Where it does not, the implications are bounded with transparency rather than dressed up in false precision. Overstated quantification, in diagnostic work, is a more serious failure than acknowledged uncertainty, and one that boards increasingly recognise.

It is externally calibrated. Leadership receives a clear view of where the organisation sits relative to sector peers at comparable levels of AI maturity, not as a ranking, which tends to flatter or alarm without clarifying what to do, but as a reference point that brings context and objectivity to questions that are otherwise assessed entirely from the inside.

It is decision-usable. The findings are structured so that the leadership team can move directly from diagnosis to strategic choice with a shared understanding of which constraints matter most, which are most urgent, and which carry the greatest commercial consequence if left unaddressed. In practice, this is frequently the moment at which an organisation moves from a broad and shared sense of frustration to a precise, aligned understanding of the problem it actually needs to solve.

That shift from felt frustration to diagnostic clarity is, in itself, strategically significant. It is also the point at which the conversation about what to do next becomes meaningfully different from the one the organisation has been having internally.

The organisations that extract the most value from this process

This engagement is not appropriate for every organisation, and it is important to be explicit about where it creates the greatest value.

It is most effective for organisations that have already been investing in AI and AI pilots for 12 to 24 months or longer and are beginning to see a widening gap between the ambition of the programme and the evidence of its impact.

Typically, these are organisations where pilots have been launched, yet production deployment remains below expectation; where adoption is weaker than it should be despite credible investment in change management; and where the financial case for continued investment has become more difficult to defend at the board level, even as leadership remains committed to the broader AI agenda.

The engagement is particularly valuable in situations where the issue is no longer whether AI matters, but why meaningful value is not yet being realised at the rate the organisation expected.

It also requires a leadership team with both the willingness and the authority to confront a diagnosis that may challenge its current interpretation of the problem. In many cases, the findings point beyond the AI programme itself and toward changes in governance, performance management, incentive structures, or operating model design. That level of insight is only useful where there is genuine readiness to act on it.

Organisations seeking confirmation of what they already believe are unlikely to derive full value from the process. Organisations seeking a precise, evidence-based articulation of what they have sensed but have not yet been able to define clearly are often the ones that benefit most.

By contrast, the diagnostic is rarely the right first step for organisations still in the earliest stage of AI exploration. Where the central question is not why existing investment is underperforming, but where and how to begin, the appropriate entry point is the full strategic AI blueprint itself. Organisations at this stage benefit from working the problem in the opposite direction: starting from the commercial outcomes the business is trying to achieve, identifying the AI-enabled capabilities most likely to shift those outcomes, and designing the governance, data, and operating architecture required to deliver them, before capital is committed to a portfolio of pilots that may or may not connect to enterprise value.

This sequence matters. The structural conditions that later constrain value realisation are far less expensive to design into an AI programme at inception than to retrofit once pilots, vendor relationships, and internal expectations have already taken shape. Organisations that begin with a strategic blueprint tend to avoid the diagnostic engagement altogether, not because the structural conditions do not apply to them, but because those conditions were resolved before they had the opportunity to compound.

In either case, the underlying principle is the same: clarity about where the organisation actually is, and what is actually constraining it, has to precede any serious conversation about what it should do next. The form that clarity takes simply differs depending on how far into the work the organisation has already travelled.

From diagnosis to design

Diagnosis, however rigorous, is not strategy. It establishes what is true about the organisation, including the specific structural conditions constraining AI value, their commercial implications, and what must change to resolve them. Strategy is the act of translating that understanding into a coherent design for how the organisation will actually operate differently.

The distinction matters because the two are often conflated. Many organisations possess documents labelled “AI strategy” that are in practice either aspirational statements (what we want AI to enable) or implementation plans (what we intend to build). Neither is strategy in the sense that a board requires.

Strategy, properly constructed, sets out the governance model, data architecture, incentive framework, and sequenced portfolio of initiatives that together resolve the diagnosed constraints, supported by financial modelling that ties each element to a quantified contribution to enterprise value.

What distinguishes strategy built on diagnosis from strategy built on aspiration is not sophistication of language or quality of presentation. It is constructability. A strategy built upward from the organisation’s actual constraints, operating realities, and value-creation opportunities describes changes that can be made, in the sequence in which they can be made, by the people who will be required to make them. A strategy assembled from generic transformation assumptions or external best practices, however well-written, describes a destination without describing a route.

The practical consequence is that boards are now asking three questions of AI programmes with increasing consistency:

- Where are we, exactly?

- Where are we going, and on what evidence?

- How will we know whether we are on track, and what will trigger intervention if we are not?

These questions cannot be answered convincingly through aspiration, analogy, or benchmark references. They require evidence grounded in the organisation’s own structure, performance realities, and data. An AI strategy that cannot answer them is not a strategy the board can govern against; it is a narrative the board is being asked to accept.

This is the substantive difference between a basic strategy document and a decision framework. The former tells a story. The latter gives leadership a credible basis on which to evaluate progress, govern risk, and commit with confidence to the next phase of investment. In an environment where AI capital allocation is increasingly scrutinised at board and investor level, that distinction is no longer a matter of preference. It is rapidly becoming a condition of continued funding.

A closing observation

The life sciences organisations closing the AI value gap most effectively are not, in my experience, those with the largest technology budgets or the most sophisticated vendor ecosystems. More often, they are the organisations that identified the true source of constraint earlier than their competitors, and acted on it with precision, while the rest of the sector was still optimising around symptoms.

That window is narrowing. AI maturity across life sciences is advancing quickly, and the structural issues that remain invisible today do not stay invisible for long. They become visible to boards, investors, and competitors on a timeline measured in quarters rather than years. Organisations that treat this as a strategic discipline now, rather than a programme management challenge to be resolved later, will find themselves with meaningful and compounding advantages by the time the sector catches up. Those that do not will find the cost of remediation considerably higher than the cost of early diagnosis.

For leaders who recognise some version of their own organisation in the patterns described here, the useful next conversation is rarely a broad discussion of AI transformation. It is a focused one about where the specific constraints in their environment actually sit, and what a credible path to resolving them would require.

Request a Diagnostic Sprint

A short, pharma-specific sprint to pinpoint what’s blocking AI value, what it’s costing you, and what to do first.

For more information, contact Dr Andree Bates at abates@eularis.com.